Recently, I was reading an article from one major manufacturer of high-end cars in which the company was politely bragging about their “zero-bug policy” in their software development process. A claim that undoubtedly captured my attention. While the article started bold and promising, it quickly mutated into some acquiescence:

With ‘zero-bug’, they actually meant zero bugs that *are known*. Well, following that reasoning, all software that we develop has zero bugs…if we don’t know them.

One thing was spot on in the article though; bugs are unavoidable. Firstly, because we are fallible. And second, because, as software developers and testers, we can never foresee all the scenarios and situations our software will encounter once released. And some of those unforeseen scenarios might (will) surely cause bugs to reveal themselves. Therefore, as much as we spend on complex testing suites or the best testing talent possible (which are good investments by the way) we can never fully cover all potential situations. Take commercial aircraft, with millions of lines of code running on-board. Airliners are tested very thoroughly as we all can imagine. Still, nasty bugs happen. See the case of Flight 2904 in Warsaw, Poland where an A320 overran the runway at Okęcie International Airport on 14 September 1993. Although the cause of the aircraft failing to brake in time was due to a combination of factors—including human factors—this accident involved certain design features of the aircraft. On-board software prevented the activation of both ground spoilers and thrust reversers until a minimum compression load of at least 6.3 tons was sensed on each main landing gear strut, thus preventing the crew from achieving any braking action by the two systems before this condition was met. But because of the wind conditions present that day—which impacted the way the A320 approached the runway—the aircraft stayed for a few seconds standing only on one side of the main landing gear; precious seconds of braking time lost which directly contributed to the incident. A scenario that clearly falls way outside of the typical testing strategy for this kind of system.

But, in fact, today we are not going to talk about these kinds of software bugs. We spoke so far about a kind of software bug that always takes the spotlight because it causes considerable losses and/or visible, sustained malfunction; colloquially called in some bibliography hindenbugs. But there is a more special and elusive kind of software bug: the glitch. A glitch is a slight and often temporary fault. Hard to reproduce, at times almost imperceptible from the operator’s point of view, glitches are the quintessential headache of any programmer. Now you see it, now you don’t. Let alone if the glitch appears to be a heisenglitch which is a type of glitch where the observation changes the behavior: looking for the glitch changes the results of the program, making the glitch not to reveal itself.

Software glitches are often opportunistically invoked by engineers as the potential cause of any mysterious occurrence when something happens—or someone claims that something has happened—which cannot be easily explained.

— Weird. Maybe it was just a glitch

“Just” a glitch?

Years ago, while observing the telemetry dashboard of a satellite—a screen full of time-series data represented in a myriad of colorful line plots depicting the trends of all relevant variables monitored on-board—I noticed something odd. Some plots were showing brief “jumps” in their values, only to come back to the trend it was following right before the jump. It was most visible on plots which were smooth sine waves, like for example quaternion elements, where it could be clearly observed the curve being very briefly interrupted but these abnormal hiccups. The glitch was in some way “harmless” (only a visual artifact) which contributed to the fact no one had complained about it, but it clearly indicated there was something wrong. Which is the very nature of many software glitches. They might not be causing a major problem, but their presence indicates there is something that is not nominal. Glitches must be treated seriously, ruthlessly chased down and sorted out.

So, I collected a good amount of telemetry data and I went home to analyze it in depth. The hiccups were seen on most values coming from one particular subsystem connected to the on-board computer by means of a serial port (UART). So the problem was more or less circumscribed to that particular subsystem, somewhat contained, which was good news. Said subsystem was packing all its telemetry frame in a fixed-length binary chunk composed of something like 4 kilobytes, and the unpacking of the frames was carefully done by the telemetry processing on the ground, converting all values into “human readable” units.

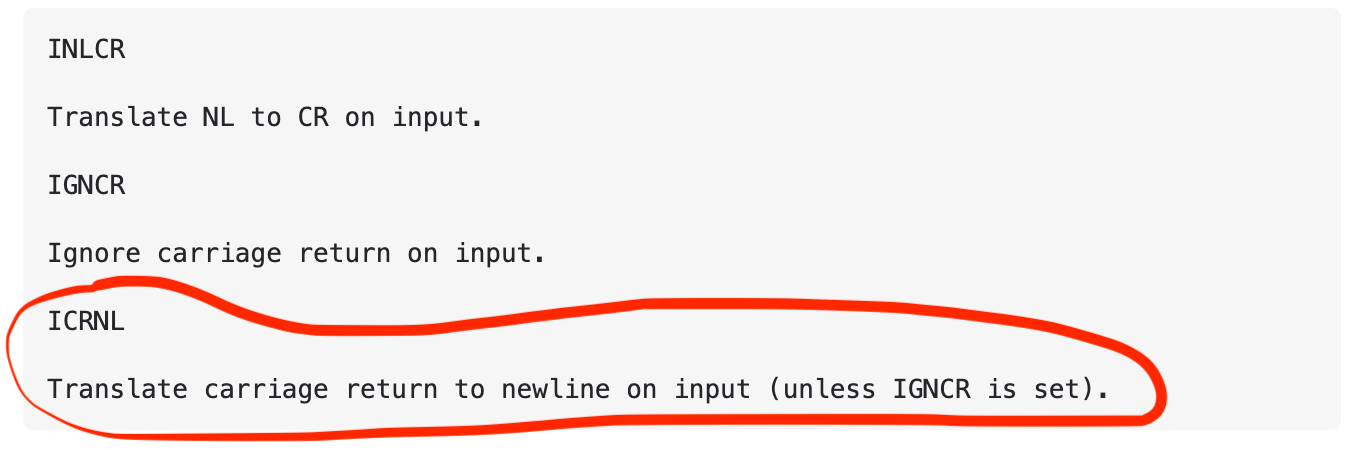

Then, while taking a histogram of the binary data (just to observe if all values of bytes were more or less happening reasonably frequently, something that is usually a good idea to do), I observed one particular value out of 255 possible values of a byte that was missing. There was no occurrence whatsoever of the byte valued 13 in decimal (0x0D in hex) at all in the several gigabytes of telemetry I had taken home with me. Definitely strange. What is more, the missing byte was an important value used in serial terminals as carriage return (CR). Carriage Return is the ASCII character sent to the terminal when you press the enter key on your keyboard while connected to a serial line, tracing back to the times where typewriters roamed the earth. What is more, it appeared as if something was replacing the missing CR character for a newline character (0x0A). Then, it all came together rather quickly by checking the configuration of the serial port attributes:

Bingo. It turned out the UART peripheral inside the on-board computer CPU was doing some very inconvenient translations of characters by default. Disabling this “feature” made the glitches disappear forever. Another one bit the dust.

This story in particular had the advantage that the glitch was quite visual and it appeared repeatedly thanks to the “persistence” and trail the line plots provided. Some other glitches are not as simple to spot or to remember, and they might also be what some bibliography call a mandelbug which is a bug that is so complex that it occurs in a chaotic or nondeterministic way. Consequently, a mandelglitch is the hardest of them all: hard to detect, brief, chaotic, quasi-random. At times, mandelbugs may show fractal behavior by revealing more and more bugs: analyzing the code to fix a bug only uncovers more bugs.

All in all, software glitches are a serious problem and they deserve the same attention as their more famous cousins the bugs. In fact, there should not be a separated terminology, a glitch is a bug, regardless of how brief or harmless it might appear to be.

In general, software does exactly what it is told to do. The reason software fails is that it is frequently told to do the wrong thing. That is not because of programmers’ incompetence but because one of the biggest, if not the biggest, challenge in software development: the barrier between the way we create software and its behavior. Creators benefit from an immediate connection to what they’re creating. The challenge with programming is that it violates this principle; the programmer, staring at a page of text, is abstracted from whatever it is they are actually making. That’s why software systems are hard—and becoming increasingly harder—to understand.

1. “Anything that can go wrong, will—at the worst possible moment. —” Finagle’s Law of Dynamic Negatives

2. https://ieeexplore.ieee.org/document/4085640

Follow ReOrbit on LinkedIn and Twitter for regular updates!

Contact us at info@reorbit.space

Hola Ignacio! I enjoyed reading your post. Timely for me, as I am trying to hunt down a glitch affecting data acquisition from one sensor in our weather station.